
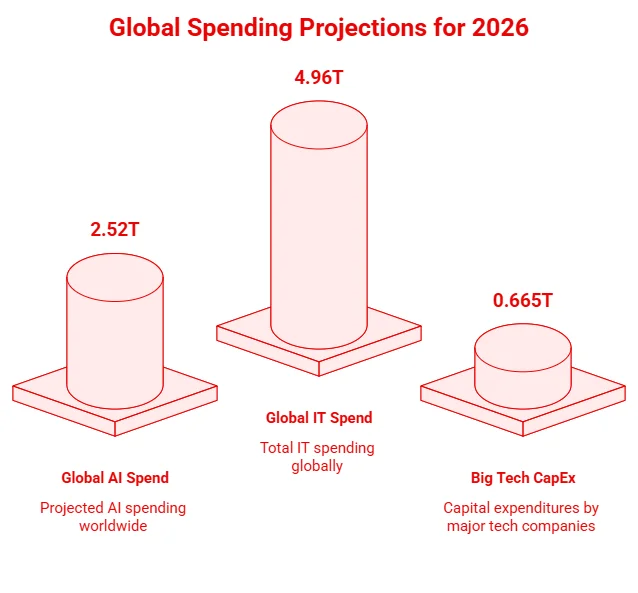

Artificial Intelligence is no longer a pilot project; it’s a $2.52 trillion industry in 2026, with Big Tech investing $650 billion and enterprise GenAI spending soaring from $11.5B in 2024 to $37B in 2025. Recent AI cloud cost statistics show that 80% of companies exceed AI cost forecasts by 25%+, and training a top-tier LLM can still cost up to $192M.

For CEOs, CFOs, and CTOs, the question is no longer whether to adopt AI but how to scale it profitably while navigating unprecedented costs and energy consumption; data centers already use about 1.5% of global electricity. Hybrid cloud, projected to reach 90% adoption by 2027, is becoming critical for cost-efficient AI infrastructure. For companies ready to implement scalable AI solutions, AppVerticals’ AI development services turn insights into actionable, cost-efficient systems.

AI cloud is no longer experimental; it’s becoming a core part of enterprise infrastructure. While total global AI spending is projected at $2.52 trillion in 2026, a substantial portion is directed toward cloud-based compute, storage, and managed AI services.

Cloud adoption is now critical for scaling AI models efficiently, with organizations committing significant operational expenditure (OpEx) to run production workloads and manage compute-intensive tasks.

The cost of running AI models varies by provider and workload, with training, inference, fine-tuning, and storage each contributing differently. Below is a breakdown of average expenses across these categories.

| Cost Category | Range / Metric | Primary Driver |
|---|---|---|
| LLM Training (Frontier) | $78M – $192M+ | Compute Duration & Cluster Size |
| GPU Inference | $0.02 – $0.50 per 1M tokens | Model Latency & Batch Size |
| Fine-Tuning | $5,000 – $150,000 | Dataset Size & Epochs |
| Storage (High Perf) | $0.10 – $0.30 per GB/mo | Training Checkpoints & Data Lakes |

These costs highlight how different factors, from compute intensity to data size, drive AI spending, and they help organizations plan and optimize their cloud budgets. With costs like these, businesses often partner with AppVerticals to build AI workflows that maximize ROI.

However, once monthly inference exceeds ~$50,000, it often becomes more cost-effective to move from managed APIs (like GPT-4) to self-hosted GPU clusters.
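The threshold above can be sanity-checked with simple arithmetic. The following is a minimal sketch, not a pricing model: the function names, the API price of $10 per 1M tokens, the cluster size, and the flat operations overhead are all hypothetical inputs chosen for illustration. It also assumes the self-hosted cluster has enough capacity to serve the full token volume.

```python
HOURS_PER_MONTH = 730  # average hours in a month (8,760 / 12)


def managed_api_monthly_cost(tokens_per_month: float, price_per_million: float) -> float:
    """Monthly bill when paying a managed API per 1M tokens."""
    return tokens_per_month / 1_000_000 * price_per_million


def self_hosted_monthly_cost(gpu_count: int, gpu_hourly_rate: float,
                             ops_overhead: float = 0.0) -> float:
    """Monthly bill for an always-on GPU cluster plus a flat overhead.

    ops_overhead (staff, networking, monitoring) is a hypothetical input.
    """
    return gpu_count * gpu_hourly_rate * HOURS_PER_MONTH + ops_overhead


def break_even_tokens(gpu_count: int, gpu_hourly_rate: float,
                      price_per_million: float, ops_overhead: float = 0.0) -> float:
    """Monthly token volume above which self-hosting is cheaper than the API."""
    fixed = self_hosted_monthly_cost(gpu_count, gpu_hourly_rate, ops_overhead)
    return fixed / price_per_million * 1_000_000


# Hypothetical scenario: 8 GPUs at $4/hour, $15,000/month overhead,
# compared with an API priced at $10 per 1M tokens.
api_bill = managed_api_monthly_cost(5_000_000_000, 10.0)
cluster_bill = self_hosted_monthly_cost(8, 4.0, 15_000.0)
print(f"API: ${api_bill:,.0f}  self-hosted: ${cluster_bill:,.0f}")
```

Under these assumed numbers, a $50,000 monthly API bill sits above the cluster's fixed cost, which is the situation the rule of thumb describes. Real comparisons also depend on utilization, model quality, and engineering effort.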

Training large language models remains extremely costly, with top-tier frontier models requiring tens to hundreds of millions of dollars in computing and related expenses.

Beyond compute, human data annotation for high-quality RLHF often surpasses compute costs. GPU rental and storage also add significantly to the total spend.

| Cost Component | Typical Range / Notes | Key Drivers |
|---|---|---|
| Frontier LLM Training (Compute) | $78M – $192M+ | Cluster size, training duration |
| Human Data Annotation (RLHF) | Often exceeds compute costs | Quality & volume of labeled data |
| GPU Rental (H100/H200) | $2 – $13+ per GPU hour | Spot vs. reserved pricing, term commitment |
| High-Performance Storage | $0.10 – $0.30 per GB/month | Training checkpoints & datasets |

This breakdown highlights why training frontier LLMs is largely limited to organizations with massive budgets, and why infrastructure, human labeling, and storage all play crucial roles in total costs.
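To see how the GPU rental and storage ranges compound into a training budget, here is a rough back-of-the-envelope sketch. The hourly and per-GB rates come from the ranges quoted above; the cluster size (10,000 GPUs), the 90-day run, and the 500 TB of checkpoints are hypothetical values picked only to illustrate the arithmetic.

```python
def training_compute_cost(gpu_count: int, days: float, rate_per_gpu_hour: float) -> float:
    """Rental cost of keeping gpu_count GPUs busy for the given number of days."""
    return gpu_count * days * 24 * rate_per_gpu_hour


def checkpoint_storage_cost(total_gb: float, rate_per_gb_month: float, months: float) -> float:
    """Cost of holding checkpoints and datasets on high-performance storage."""
    return total_gb * rate_per_gb_month * months


# Hypothetical run: 10,000 GPUs for 90 days, priced at both ends of the
# $2 to $13 per GPU hour range.
low = training_compute_cost(10_000, 90, 2.0)
high = training_compute_cost(10_000, 90, 13.0)

# Hypothetical 500 TB of checkpoints kept for 3 months at $0.10 per GB/month.
storage = checkpoint_storage_cost(500_000, 0.10, 3)

print(f"compute: ${low:,.0f} to ${high:,.0f}, storage: ${storage:,.0f}")
```

Even with identical hardware and duration, the hourly rate alone moves the estimate by more than a factor of six, which is why spot versus reserved pricing and term commitments matter so much.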


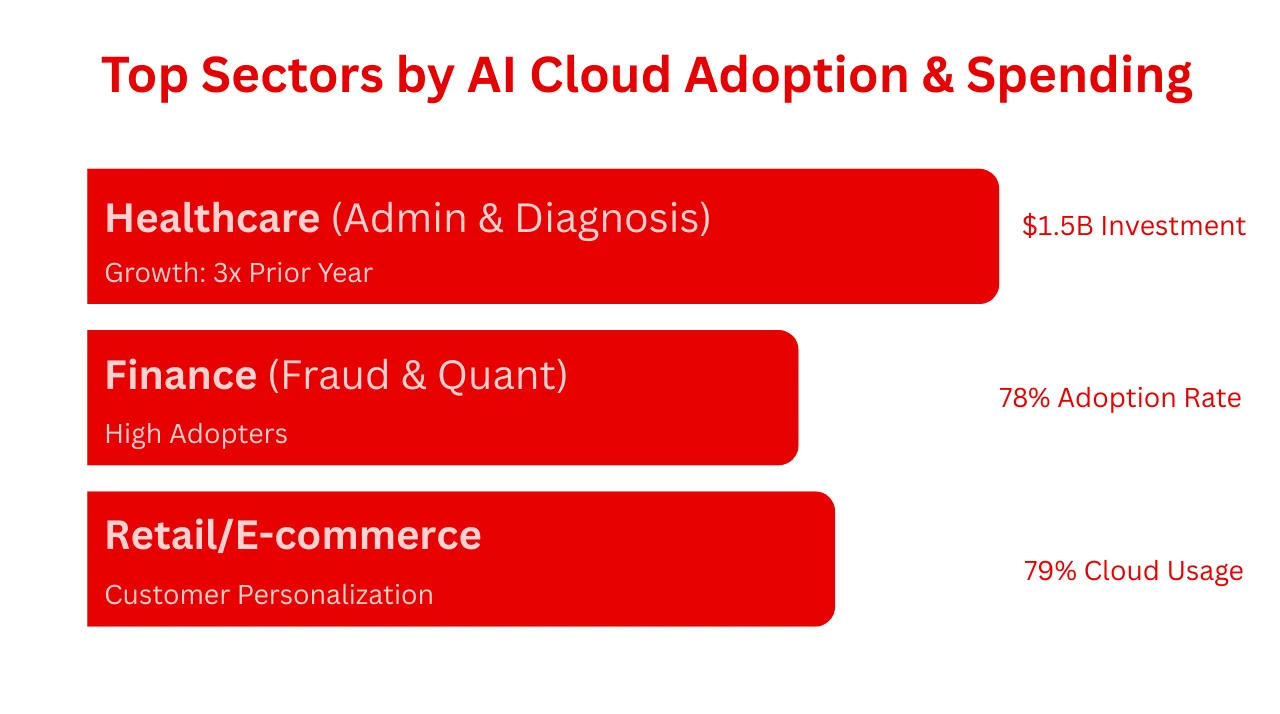

The table below shows how key industries are adopting AI cloud technologies.

| Industry | Key AI Focus | Key Stat |
|---|---|---|
| Healthcare | Administrative automation, diagnostics | $1.5B investment (3× YoY growth) |
| Finance | Fraud detection, quantitative analysis | 78% AI adoption rate |
| Retail & E-commerce | Personalization, inventory prediction | 79% cloud usage |

Healthcare leads with $1.5B invested, a threefold year-over-year increase. The sector is driven by efficiency needs in the $740 billion annual healthcare administration market, and the AI in healthcare market is projected to hit $419.56 billion by 2033.

Beyond healthcare, the banking, software, and retail sectors remain the top spenders, collectively investing $190 billion in public cloud services.

The physical demand of AI workloads is tangible: data centers now consume around 1.5% of global electricity, a figure that continues to rise.

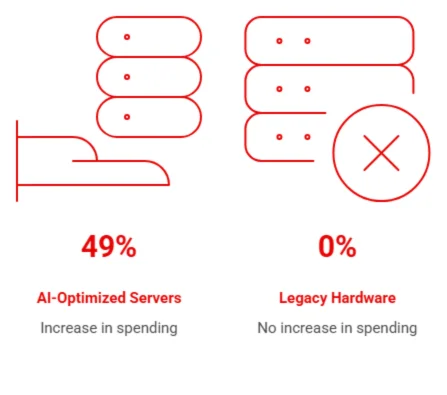

Gartner reports a 49% increase in spending on AI-optimized servers, as legacy hardware cannot handle the thermal and computational requirements of modern transformer models.

Product-Led Growth (PLG) adoption, where employees individually sign up for tools, captures 27% of AI app spend, creating “Shadow AI” costs that are hard for CFOs to track.

The global appetite for GenAI is fueling massive cloud spending. In 2025, generative AI spending is projected to reach $644 billion, with the application layer alone growing 5.3x year-over-year to $19 billion.

Optimization is no longer optional; it is a survival mechanism. Statistics show that 78% of organizations are making cloud cost optimization their top priority. When done right, the payoff is substantial, with the average cloud ROI hitting $3.86 for every $1 invested.

Optimization Checklist

Expert Opinion:

The biggest mistake teams make with AI cloud costs is optimizing too late. By the time your GPU bill is painful, you’ve already baked inefficiency into your architecture. Start with model selection — not every task needs a 70B parameter model. Right-size your inference instances based on actual latency requirements, not worst-case assumptions. Use spot instances for training workloads (they’re interruptible by design anyway). And implement automated scaling that ties compute to demand, not to what you provisioned six months ago. The goal isn’t spending less — it’s spending intentionally. Performance and cost efficiency aren’t opposites. They’re both symptoms of good engineering discipline.
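The advice to tie compute to demand can be illustrated with a small sizing function. This is a minimal sketch under stated assumptions, not a production autoscaler: the function name, the 20% headroom, and the replica limits are hypothetical defaults, and a real system would also smooth the traffic signal to avoid flapping.

```python
import math


def desired_replicas(current_rps: float, rps_per_replica: float,
                     min_replicas: int = 1, max_replicas: int = 20,
                     headroom: float = 0.2) -> int:
    """Number of inference replicas needed for the observed request rate.

    current_rps      -- observed requests per second
    rps_per_replica  -- measured throughput one replica sustains within its latency target
    headroom         -- extra capacity fraction kept for short spikes (hypothetical default)
    """
    if rps_per_replica <= 0:
        raise ValueError("rps_per_replica must be positive")
    needed = math.ceil(current_rps * (1 + headroom) / rps_per_replica)
    # Clamp so the service never scales to zero or past the budgeted ceiling.
    return max(min_replicas, min(max_replicas, needed))


# Re-evaluate on each new traffic sample instead of a fixed provisioned size.
for rps in (0, 100, 10_000):
    print(rps, desired_replicas(rps, rps_per_replica=25))
```

The `max_replicas` ceiling doubles as a cost guardrail: it caps the bill at a known worst case even if traffic spikes unexpectedly.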

FinOps is evolving to meet the AI challenge. Currently, 63% of FinOps practitioners are managing AI spending, a massive jump from just 31% the previous year.

The primary focus for 50% of these teams is workload optimization, ensuring that the code running on expensive GPUs is efficient. According to McKinsey, organizations adopting these practices typically see a payback period of 1-3 years.
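A payback period is simply the upfront investment divided by the recurring savings it produces. The sketch below shows that arithmetic with hypothetical figures; the function names and the dollar amounts are illustrative only, while the 1-3 year window is the range cited above.

```python
def payback_years(upfront_cost: float, annual_savings: float) -> float:
    """Years needed for cumulative savings to cover the upfront investment."""
    if annual_savings <= 0:
        raise ValueError("annual_savings must be positive")
    return upfront_cost / annual_savings


def within_typical_range(years: float, low: float = 1.0, high: float = 3.0) -> bool:
    """True when the payback period falls in the 1-3 year window."""
    return low <= years <= high


# Hypothetical program: $300,000 in tooling and staff time, saving $150,000 a year.
years = payback_years(300_000, 150_000)
print(years, within_typical_range(years))
```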

With 67% of CIOs prioritizing cost optimization and 59% of CTOs using multicloud strategies for security and leverage, automated policy enforcement is the only way to maintain governance.

To address this, Fairtility implemented a structured FinOps optimization strategy that included:

After these changes, the company reduced cloud costs by 26% without impacting AI performance. It also gained better visibility into resource usage, improving forecasting and budget control.

The case shows that AI cost savings often come from smarter infrastructure and financial visibility, not from cutting workloads.

Looking toward 2030, the trajectory points steeply upward. Cloud revenues are poised to reach $2 trillion by 2030, fueled largely by the AI rollout.

However, this growth comes with an energy price tag; AI processing is expected to account for 20% of all power use by 2028.

To mitigate costs and risks, enterprises are diversifying. 89% of organizations now use a multicloud strategy, and 80% utilize multiple public or private clouds.

The trend is clearly moving toward Hybrid Cloud, with adoption expected to reach 90% by 2027.

This strategy allows companies to keep sensitive, steady-state AI workloads on cheaper, private infrastructure while bursting to the public cloud for peak training needs.
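The steady-state-plus-burst pattern can be sketched as a simple split of demand across the two environments. This is an illustrative model under stated assumptions: the function names and the per-GPU-hour rates are hypothetical, and private capacity is treated as a fixed cost that is paid whether or not it is fully used.

```python
def split_hybrid(demand_gpu_hours: float, private_capacity_gpu_hours: float) -> tuple:
    """Return (private, public) GPU hours: fill private capacity first, burst the rest."""
    private = min(demand_gpu_hours, private_capacity_gpu_hours)
    public = max(0, demand_gpu_hours - private_capacity_gpu_hours)
    return private, public


def hybrid_cost(demand_gpu_hours: float, private_capacity_gpu_hours: float,
                private_rate: float, public_rate: float) -> float:
    """Total cost: all private capacity is paid for, public is paid per use."""
    _, public = split_hybrid(demand_gpu_hours, private_capacity_gpu_hours)
    return private_capacity_gpu_hours * private_rate + public * public_rate


# Hypothetical month: 1,200 GPU hours of demand against 1,000 hours of private
# capacity, at assumed rates of $2/hour private and $5/hour public.
print(split_hybrid(1200, 1000))
print(hybrid_cost(1200, 1000, private_rate=2.0, public_rate=5.0))
```

Because idle private capacity still costs money in this model, the approach only pays off when the steady-state baseline keeps the private cluster well utilized.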

Effective decision-making requires accurate forecasting, yet this remains a major pain point. A concerning 80% of companies miss their AI forecasts by more than 25%.
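One way to make forecast misses visible early is to compute the overrun for each budget line and flag anything past the 25% mark. The sketch below assumes hypothetical function names and sample figures; only the 25% threshold comes from the statistic above.

```python
def forecast_overrun(forecast: float, actual: float) -> float:
    """Fraction by which actual spend exceeds the forecast (negative if under)."""
    if forecast <= 0:
        raise ValueError("forecast must be positive")
    return (actual - forecast) / forecast


def exceeds_threshold(forecast: float, actual: float, threshold: float = 0.25) -> bool:
    """True when spend overshoots the forecast by more than the threshold."""
    return forecast_overrun(forecast, actual) > threshold


# Hypothetical budget lines: (name, forecast, actual) in dollars.
lines = [
    ("inference", 100_000, 130_000),
    ("training", 200_000, 210_000),
]
flagged = [name for name, forecast, actual in lines if exceeds_threshold(forecast, actual)]
print(flagged)
```

Running a check like this monthly, rather than at year end, gives finance teams time to adjust before a small miss becomes a large one.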

The data for 2026 is clear: AI is fueling a historic expansion in cloud infrastructure, with spending hitting $2.52 trillion amid 44% YoY growth.

Yet, the path to value is fraught with financial peril. With 80% of companies missing their forecasts and a growing trend of 67% of organizations considering repatriation of workloads to hybrid environments, the era of “growth at all costs” is over.

Having worked with hundreds of organizations navigating this transition, my advice to CFOs and CTOs is simple: treat AI compute as a finite, precious resource, not an infinite utility. The winners in 2026 won’t just be the companies with the smartest models; they will be the companies with the smartest FinOps strategies.
